| Frame Rate Number | Frame Rate |
|---|---|
| 0 | 1.875 fps |
| 1 | 3.75 fps |
| 2 | 7.5 fps |
| 3 | 15 fps |
| 4 | 30 fps |
| 5 | 60 fps |
| 6 | 120 fps |
| 7 | 240 fps |

Note that all of these rates are supported by Format 0 (although the camera might not support them!), but frame rates 0, 1, 6 and 7 are sometimes not supported for every mode in Formats 1 and 2. See the DCAM spec [pdf] for details.
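Each entry in the table above is double the previous one, so the rate can be computed from the frame rate number. Here is a minimal sketch; the function name `frame_rate_fps` is my own and is not part of any camera library.

```python
BASE_FPS = 1.875  # rate for frame rate number 0, taken from the table


def frame_rate_fps(number: int) -> float:
    """Return the frame rate in fps for a frame rate number (0 to 7)."""
    if not 0 <= number <= 7:
        raise ValueError("frame rate number must be between 0 and 7")
    # Each step up the table doubles the rate.
    return BASE_FPS * 2 ** number


if __name__ == "__main__":
    for n in range(8):
        print(n, frame_rate_fps(n), "fps")
```

This only reproduces the table; whether a particular camera or mode accepts a given rate still has to be checked against the camera itself.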

In addition, there is Format 7, which allows precise control over the video. It can control the video size, binning, colour model and so on. This is really useful for cameras with non-standard sensor sizes and for CMOS cameras where regions of interest can be specified.

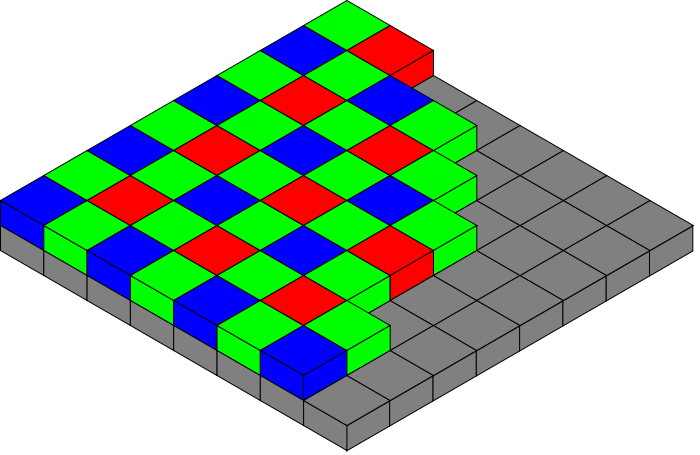

The description in the table above takes some explaining. CCD and CMOS sensors are monochromatic: by themselves they cannot differentiate colours. To do that, filters need to be added. There are two main ways of doing this:

- have three sensors with three separate red (R), green (G) and blue (B) filters. This gives the best result but is the most expensive; it requires three sensors and additional optics to line them up correctly

- have a filter array of alternating red, green and blue elements placed on top of a single sensor.

The second option is by far the most common and is known as a Bayer filter. So if our image is 640x480 and RGB, does that mean the sensor has 640x480x3 pixels? Sadly the answer is no. The sensor has 640x480 pixels, each measuring only one colour, and the other two colours at each pixel are interpolated by looking at the surrounding pixels. You need to be aware of this if you are doing colour image processing.
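To make the interpolation concrete, here is a small sketch in plain Python. It assumes an RGGB tile layout (real sensors may start the pattern on a different colour) and uses the simplest possible interpolation, averaging the neighbouring green pixels. The function names are illustrative only. Note that a Bayer pattern has two green sites for every red and blue one.

```python
def bayer_channel(row: int, col: int) -> str:
    """Colour of the filter over pixel (row, col), assuming an RGGB tile."""
    if row % 2 == 0:
        return "R" if col % 2 == 0 else "G"
    return "G" if col % 2 == 0 else "B"


def green_at(raw, row: int, col: int) -> float:
    """Green value at (row, col): measured on a green site, otherwise the
    mean of the in-bounds up/down/left/right neighbours, which are all green."""
    if bayer_channel(row, col) == "G":
        return raw[row][col]
    candidates = ((row - 1, col), (row + 1, col), (row, col - 1), (row, col + 1))
    values = [raw[r][c] for r, c in candidates
              if 0 <= r < len(raw) and 0 <= c < len(raw[0])]
    return sum(values) / len(values)


def count_sites(width: int, height: int) -> dict:
    """How many pixels of the sensor actually measure each colour."""
    counts = {"R": 0, "G": 0, "B": 0}
    for row in range(height):
        for col in range(width):
            counts[bayer_channel(row, col)] += 1
    return counts


if __name__ == "__main__":
    # Made-up raw sensor values: one number per pixel, not three.
    raw = [
        [10, 20, 10, 20],
        [30, 40, 30, 40],
        [10, 20, 10, 20],
        [30, 40, 30, 40],
    ]
    print(green_at(raw, 2, 2))    # red site, green is estimated
    print(count_sites(640, 480))  # measured samples per colour
```

For a 640x480 sensor only a quarter of the pixels measure red and a quarter measure blue; everything else in the RGB image is an estimate.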

The human eye is better at detecting grey level (luma) changes than it is at seeing colour changes. The camera transmission protocols take advantage of this. YUV is a colour space widely used in TV transmission to compress the data. The Y is the luma value and there are two colour, or chrominance, components (U and V). Since the human eye has poor colour resolution, the Y value can be transmitted at a higher data rate than the colours. So YUV 4:4:4 means that for every four Y components, four U and four V are transmitted. 4:2:2 means half of the colour data has been discarded, and 4:1:1 means only one U and one V are transmitted for every four luma values. This way the data has been compressed.
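The saving is easy to work out. The sketch below counts the samples sent per group of four pixels for each mode and turns that into bytes per frame, assuming 8 bits per sample (an assumption on my part; other bit depths exist). The names are illustrative.

```python
# (Y, U, V) samples transmitted for every four pixels.
SAMPLES_PER_4_PIXELS = {
    "4:4:4": (4, 4, 4),
    "4:2:2": (4, 2, 2),
    "4:1:1": (4, 1, 1),
}


def bytes_per_frame(width: int, height: int, mode: str) -> int:
    """Frame size in bytes, assuming one byte (8 bits) per sample."""
    y, u, v = SAMPLES_PER_4_PIXELS[mode]
    return width * height * (y + u + v) // 4


def bytes_per_second(width: int, height: int, mode: str, fps: float) -> float:
    """Raw video data rate, ignoring any packet or protocol overhead."""
    return bytes_per_frame(width, height, mode) * fps


if __name__ == "__main__":
    for mode in SAMPLES_PER_4_PIXELS:
        print(mode, bytes_per_frame(640, 480, mode), "bytes per frame")
```

At 640x480 this gives 3, 2 and 1.5 bytes per pixel for 4:4:4, 4:2:2 and 4:1:1 respectively, so 4:1:1 needs half the bandwidth of 4:4:4.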

To get RGB colour back, the data has to be interpolated again. That is now two rounds of interpolation on the colour data, so expect errors! If you are doing colour image processing I would avoid the YUV 4:2:2 and 4:1:1 modes. Many cheap webcams appear to transmit in these two modes.
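As a rough illustration of that second step, the sketch below unpacks a 4:2:2 byte stream and converts it to RGB. Two things here are assumptions rather than facts from this article: the byte order U, Y0, V, Y1 per pixel pair, and the full-range BT.601 conversion coefficients. Check both against your camera's documentation. For brevity the missing chroma is simply copied to both pixels of a pair instead of being interpolated.

```python
def clamp(value: float) -> int:
    """Round to the nearest integer and limit to the 0..255 range."""
    return max(0, min(255, int(round(value))))


def yuv_to_rgb(y: int, u: int, v: int):
    """Convert one YUV sample to RGB (assumed full-range BT.601 coefficients)."""
    r = y + 1.402 * (v - 128)
    g = y - 0.344136 * (u - 128) - 0.714136 * (v - 128)
    b = y + 1.772 * (u - 128)
    return clamp(r), clamp(g), clamp(b)


def yuv422_to_rgb(data: bytes):
    """Turn a 4:2:2 stream (assumed order U, Y0, V, Y1) into a list of RGB tuples.

    Each group of four bytes describes two pixels that share one U and one V.
    """
    pixels = []
    for i in range(0, len(data) - 3, 4):
        u, y0, v, y1 = data[i:i + 4]
        pixels.append(yuv_to_rgb(y0, u, v))  # chroma shared by both pixels
        pixels.append(yuv_to_rgb(y1, u, v))
    return pixels


if __name__ == "__main__":
    # Two grey pixels: neutral chroma (128) leaves R = G = B = Y.
    print(yuv422_to_rgb(bytes([128, 100, 128, 200])))
```

Because both pixels of a pair get the same U and V, a sharp colour edge that falls between them is smeared, which is exactly the kind of error to expect from these modes.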